Jan 22, 2018 9:45 AM

Post Listing Module Causing Google Crawl Errors

We are having issues with the Post Listing module lately. Google Search Console is finding crawl errors for all posts linked in the listing. The links behave normally when clicked, but they are sending Google to a broken page.

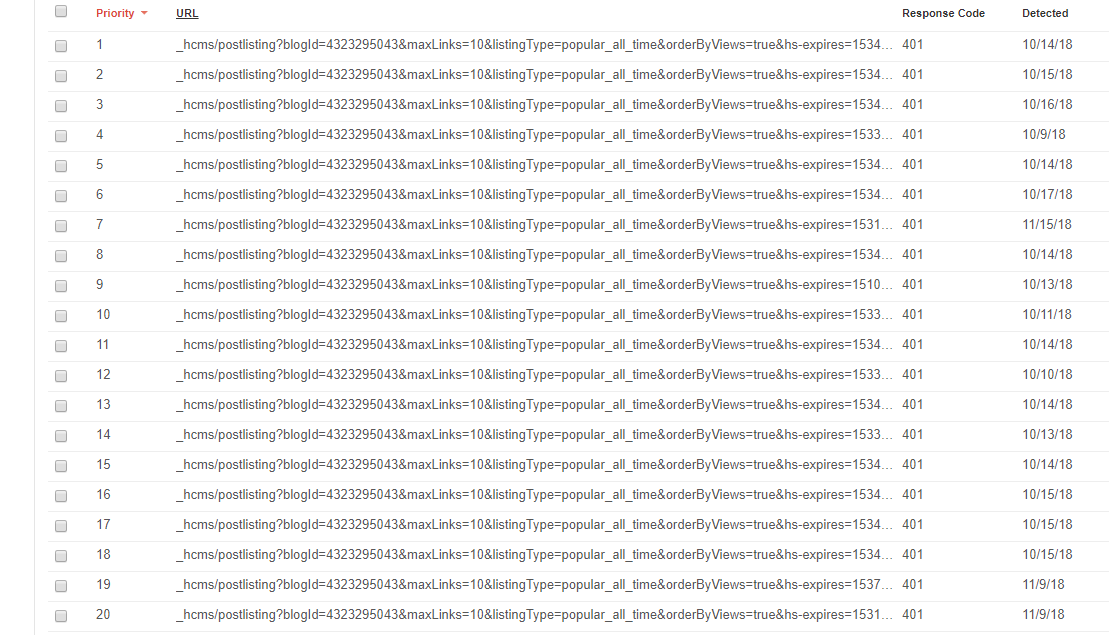

The Google crawl errors look like this:

_hcms/postlisting?blogId=3768556641&maxLinks=10&listingType=recent&orderByViews=false&hs-expires=1511723212&hs-version=1&hs-signature=AIj1bPt_dIP8UrRND2Yq1tRZne7VjHK0Jw

401

Linked from: https://www.hillandgriffith.com/concrete-casting-news/release-application-video

These all lead to a blank page that just says “expired”.
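For anyone trying to make sense of the "expired" page: the crawl-error URL above carries an `hs-expires` parameter. The sketch below assumes (this is not confirmed anywhere in the thread) that the value is a Unix timestamp in seconds, and decodes it. The helper name `postlisting_expiry` is made up for illustration.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs, urlsplit


def postlisting_expiry(url: str) -> datetime:
    """Decode the hs-expires query parameter of a postlisting URL.

    Assumption: hs-expires is a Unix timestamp in seconds (UTC).
    """
    query = parse_qs(urlsplit(url).query)
    return datetime.fromtimestamp(int(query["hs-expires"][0]), tz=timezone.utc)


if __name__ == "__main__":
    # URL copied from the crawl error quoted in the post.
    crawl_error_url = (
        "_hcms/postlisting?blogId=3768556641&maxLinks=10&listingType=recent"
        "&orderByViews=false&hs-expires=1511723212&hs-version=1"
        "&hs-signature=AIj1bPt_dIP8UrRND2Yq1tRZne7VjHK0Jw"
    )
    print(postlisting_expiry(crawl_error_url).isoformat())
```

If that assumption holds, the link had a limited lifetime, which would fit Google getting a 401 when it crawls the URL some time after the page was rendered.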

If I view the page source, these broken links aren’t present. If I search the rendered page with developer tools, they are. So these links are generated by JavaScript. Has anyone else run into this problem and fixed it? The only solution I can think of is abandoning the HubSpot module and writing a new one; the custom ones I have on other sites don’t produce this issue. I’d like to avoid that because this site has a lot of templates, each needing a listing from a different category. It’d be great if HubSpot could just fix the issue with the standard module.

Nov 26, 2018 8:22 PM

Oct 29, 2018 12:49 PM

Hi there,

I'm having issues with this as well. I see that up until October 4th, these pages (from popular posts, etc.) were being blocked. Did something change on the fourth?

Oct 4, 2018 1:13 PM

Hi all,

Thanks for your patience here. I dug into this with the team, and we've removed these resources from the robots.txt files by default and set the X-Robots-Tag: none response header (equivalent to noindex, nofollow) on the _hcms/postlisting requests, as documented by Google. This should resolve these errors in the console going forward.
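As a rough illustration of the header mentioned above: a crawler only sees X-Robots-Tag if it is allowed to fetch the URL, which is presumably why the robots.txt entries were removed at the same time. The sketch below shows how a value such as `none` expands into its directives. The function names are hypothetical, and it ignores the optional per-crawler prefix form (for example `googlebot: noindex`).

```python
def parse_x_robots_tag(header_value: str) -> set[str]:
    """Expand an X-Robots-Tag header value into lowercase directives.

    Simplification: the optional "user-agent:" prefix form is not handled.
    """
    directives = {
        part.strip().lower() for part in header_value.split(",") if part.strip()
    }
    if "none" in directives:
        # "none" is shorthand for "noindex, nofollow".
        directives |= {"noindex", "nofollow"}
    return directives


def blocks_indexing(header_value: str) -> bool:
    """Return True if the header value asks crawlers not to index the URL."""
    return "noindex" in parse_x_robots_tag(header_value)


if __name__ == "__main__":
    for value in ("none", "noindex, nofollow", "nofollow"):
        print(value, "->", sorted(parse_x_robots_tag(value)), blocks_indexing(value))
```

To check a live site, you could read the X-Robots-Tag header from a response to one of the _hcms/postlisting URLs and pass its value to `blocks_indexing`.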

Oct 11, 2018 9:02 AM

I just got a new batch of alerts from Google Search Console related to these errors.

Googlebot couldn't crawl your URL because your server either requires authentication to access the page, or it is blocking Googlebot from accessing your site.

Here is an example of a link they are flagging:

https://blogs.psglearning.com/_hcms/postlisting?blogId=4302585742&maxLinks=10&listingType=recent&ord...

Aug 20, 2018 2:52 PM

Hi @Patrick_Dowling and @Alexander_Hultquist,

Let me dig into this with the team. I'll update this thread when I have more information.

Aug 14, 2018 8:58 AM

I had a bunch of errors (soft 404) on /_hcms/perf paths. In my case the record in robots.txt looked like this:

Disallow: /_hcms/perf/

However, this does not match /_hcms/perf (without the trailing slash), so I changed it to:

Disallow: /_hcms/perf

Because robots.txt rules are matched as path prefixes, this also matches the URL with the trailing slash.

I suppose your problem with the /_hcms/postlisting URL is caused by a similar record (although in my case HubSpot adds this URL to the robots.txt by default).
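The trailing-slash behaviour described above can be checked with a quick sketch using Python's standard `urllib.robotparser`. It does simple prefix matching, so treat it as an approximation of how Googlebot evaluates rules rather than an exact replica. The helper name `is_blocked` and the www.example.com host are placeholders.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(disallow_rule: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if a one-rule robots.txt blocks `url` for `agent`."""
    parser = RobotFileParser()
    # Build a minimal robots.txt that applies the rule to every crawler.
    parser.parse(["User-agent: *", f"Disallow: {disallow_rule}"])
    return not parser.can_fetch(agent, url)


if __name__ == "__main__":
    urls = (
        "https://www.example.com/_hcms/perf",
        "https://www.example.com/_hcms/perf/",
    )
    for rule in ("/_hcms/perf/", "/_hcms/perf"):
        for url in urls:
            print(f"Disallow: {rule:<14} {url:<40} blocked={is_blocked(rule, url)}")
```

With the trailing slash in the rule, only the second URL is blocked; without it, both are.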

Aug 12, 2018 5:12 PM

We are seeing this error as well. This is the URL that is showing an error:

https://www.hq-digital.com/_hcms/postlisting?blogId=5735971411&maxLinks=200&listingType=popular_all_...

Any help is appreciated!

Aug 8, 2018 8:02 AM

I am seeing an error on similar pages in my search console as well. It is listed as "Indexed, though blocked by robots.txt". Is it important for these pages to be indexed? All my posts have a similar URL in the source but the 'hs-signature' value changes depending on the page. What is the 'hs-signature'?

I couldn't find the page that generated this link but here is the offending URL:

https://blogs.psglearning.com/_hcms/postlisting?blogId=4302585742&maxLinks=10&listingType=recent&ord...

May 31, 2018 1:49 PM

Hi @Josh_Loewen,

Can you give me an example page where you're seeing these links? It'd also be helpful if you could include an example of a full URL being included in the search console.

Apr 5, 2018 10:20 AM

Hi @Luciano_Tolomei,

Thanks for your patience here; we've recently pushed out a fix that should prevent Google Search Console from picking up these links. Let me know if you're still seeing issues going forward (allow ~5 hours for all caches to clear).

May 28, 2018 12:55 PM

I'm still seeing one in my Search Console: www.example.com/_hcms/perf

Mar 28, 2018 2:57 PM

Hi @Luciano_Tolomei,

Sorry for the delay here, I appreciate your patience. I'm currently digging into this with the team. I'll update this topic when I have more information.

Mar 23, 2018 1:27 PM

Hi @Luciano_Tolomei,

Can you send me a link to the blog listing page you're referring to?

Mar 23, 2018 2:09 PM

Every page on the site www.dmep.it.

In the footer we have the Recommended Articles beta module.

Mar 21, 2018 1:58 PM

Jan 25, 2018 2:18 PM

Hi @mykeamend,

Sounds good, glad to hear this issue has been fixed. If you see these errors again feel free to post here and I’ll dig in with the team.

Jan 24, 2018 1:48 PM

Hi @mykeamend,

Can you send me a link to your blog listing page so I can take a closer look?

Jan 24, 2018 2:10 PM

Derek,

Actually, the issue is no longer happening. My best guess is that they fixed it in the last day or so. I’ll mark these as fixed in Search Console and see if they pop back up again. Hopefully they won’t.

Thank you.

Jan 24, 2018 2:04 PM

It is here. https://www.hillandgriffith.com/concrete-casting-news

Using developer tools, you can find the offending links. They are not in the page source otherwise. Google is finding them, and I suppose that is our main problem with this.

The offending blog listings are using the standard HubSpot Post Listing module.
